LLM AI-Generated Text Classification

Detecting AI-Generated Content and Enhancing Robustness

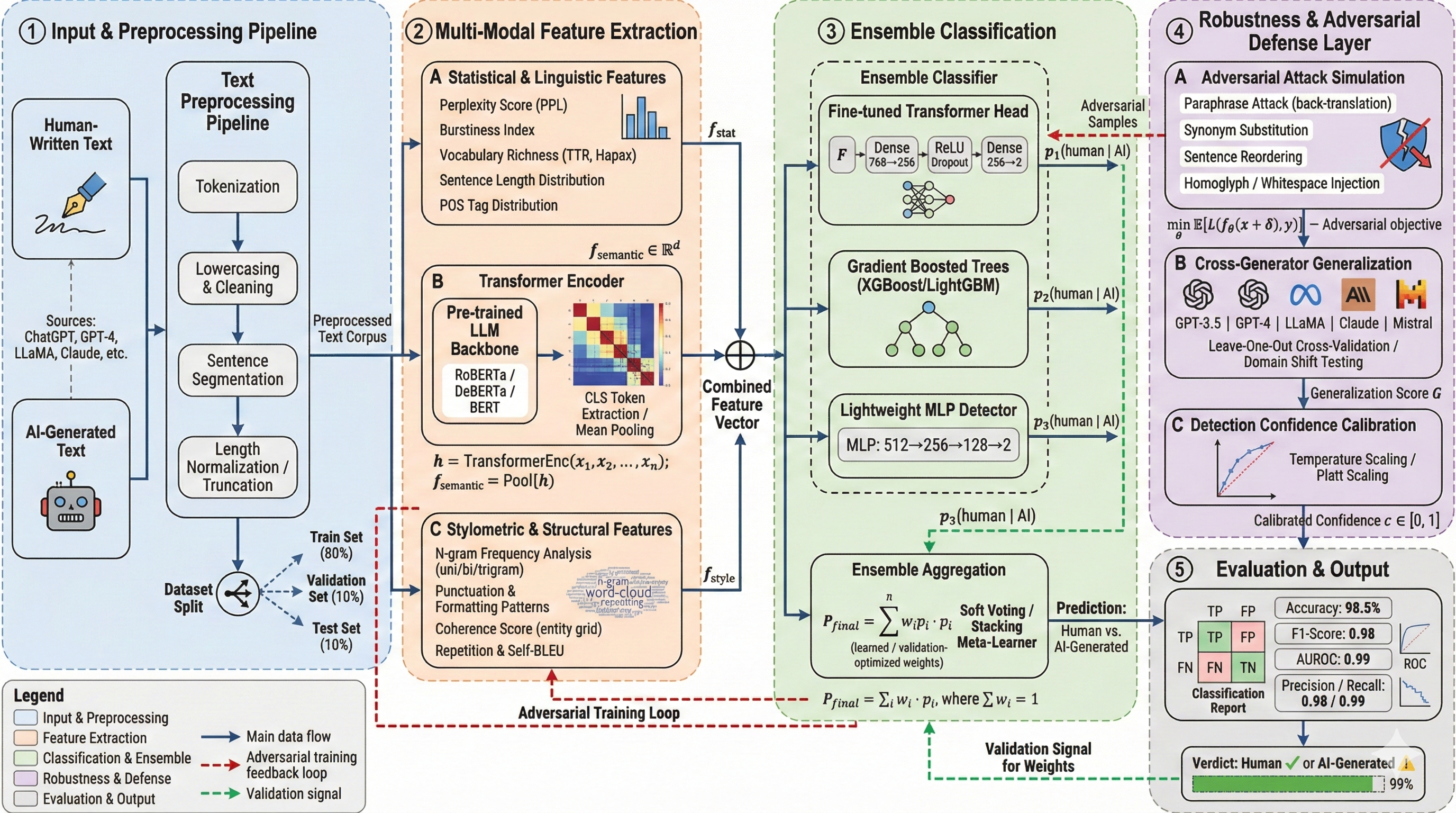

Overview

This open research project, conducted in collaboration with the Vision and Language Group, develops robust methods for identifying AI-generated text and for making detection systems resilient to evasion attempts and to newly emerging text generators.

Project Details

Duration: December 2023 – January 2024

Collaboration: Vision and Language Group

Code Repository: GitHub

Research Objectives

Primary Goals

- Detection Accuracy: Develop highly accurate classifiers that distinguish human-written from AI-generated text

- Robustness Enhancement: Build systems resistant to adversarial attacks and evasion techniques

- Generalization: Create models that generalize across different AI text-generation systems
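"Detection accuracy" needs a concrete metric. The page does not say which one the project used. A common threshold-independent choice is ROC AUC, which is the probability that a randomly chosen AI-generated text scores higher than a randomly chosen human-written one. The sketch below computes it with the rank-sum (Mann-Whitney U) identity. It assumes the label convention 1 = AI-generated and 0 = human-written, and that higher scores mean "more likely AI-generated".

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """ROC AUC via the rank-sum (Mann-Whitney U) formulation.

    Assumed convention: label 1 = AI-generated, 0 = human-written, and a
    higher score means "more likely AI-generated". Tied scores share the
    average of their ranks, so ties count as half a correct ordering.
    """
    if len(labels) != len(scores):
        raise ValueError("labels and scores must have the same length")
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need at least one positive and one negative example")

    # Sort indices by score and assign 1-based ranks, averaging over ties.
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean of ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1

    # U statistic for the AI-generated class, normalized to [0, 1].
    rank_sum_pos = sum(r for r, y in zip(ranks, labels) if y == 1)
    u_pos = rank_sum_pos - n_pos * (n_pos + 1) / 2
    return u_pos / (n_pos * n_neg)
```

For example, `roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])` ranks three of the four (human, AI) pairs correctly. A value of 0.5 means the detector ranks no better than chance.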

Technical Approach

Machine Learning Methods

- Implemented advanced NLP techniques for text analysis

- Developed feature extraction methods targeting patterns characteristic of AI-generated text

- Applied ensemble learning approaches for improved detection accuracy
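The page does not list the project's actual features or ensemble members, so the following is only an illustration of the general idea. It computes a few hypothetical stylometric features that are often discussed for AI-text detection (vocabulary diversity, sentence-length "burstiness", punctuation rate). It then combines several scorers with soft voting, which is a weighted average of their predicted probabilities. `extract_features`, `soft_vote`, and both member functions are hypothetical names. The members' coefficients are untrained placeholders that stand in for real trained classifiers.

```python
import math
import re
import statistics
from typing import Callable, Dict, Sequence

# Hypothetical stylometric features; not the project's actual feature set.
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")
WORD = re.compile(r"[A-Za-z']+")

Features = Dict[str, float]
Scorer = Callable[[Features], float]  # maps features to P(AI-generated)


def extract_features(text: str) -> Features:
    """Compute a few simple stylometric signals from raw text."""
    sentences = [s for s in SENTENCE_SPLIT.split(text.strip()) if s]
    words = WORD.findall(text.lower())
    sent_lengths = [len(WORD.findall(s)) for s in sentences]
    n_words = max(len(words), 1)  # avoid division by zero on empty input
    return {
        # Vocabulary diversity: distinct words / total words.
        "type_token_ratio": len(set(words)) / n_words,
        "mean_sentence_len": statistics.mean(sent_lengths) if sent_lengths else 0.0,
        # "Burstiness": spread of sentence lengths (0.0 for a single sentence).
        "sentence_len_std": statistics.pstdev(sent_lengths) if sent_lengths else 0.0,
        "punct_per_word": sum(text.count(c) for c in ",;:!?") / n_words,
    }


def soft_vote(scorers: Sequence[Scorer], weights: Sequence[float], feats: Features) -> float:
    """Weighted average of member probabilities (soft-voting ensemble)."""
    if not scorers or len(scorers) != len(weights):
        raise ValueError("need at least one scorer and one weight per scorer")
    total = sum(weights)
    return sum(w * s(feats) for s, w in zip(scorers, weights)) / total


def _logistic(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


# Toy placeholder members with illustrative, untrained coefficients. In
# practice each would be a trained classifier's probability output.
def low_diversity_member(f: Features) -> float:
    return _logistic(8.0 * (0.6 - f["type_token_ratio"]))


def uniform_length_member(f: Features) -> float:
    return _logistic(0.5 * (4.0 - f["sentence_len_std"]))


if __name__ == "__main__":
    feats = extract_features("The cat sat on the mat. Then it slept for a while!")
    print(feats)
    print(soft_vote([low_diversity_member, uniform_length_member], [1.0, 1.0], feats))
```

Soft voting lets members that are confident about a given text outweigh members that are not. Hard (majority) voting discards that information.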

Robustness Strategies

- Investigated adversarial robustness against sophisticated evasion attempts

- Developed techniques to handle evolving AI generation methods

- Implemented cross-domain validation for generalization
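Cross-domain validation is commonly implemented as leave-one-group-out evaluation. Each fold holds out one entire group and trains on the rest, so the score reflects performance on a domain the model never saw. A "group" could be a text domain (essays, news, reviews) or the generator that produced the text, which also probes the generalization-to-unseen-generators goal. This is a minimal sketch. The group labels and the `train_and_score` callback are hypothetical, and scikit-learn's `LeaveOneGroupOut` splitter offers the same split if that library is available.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterator, List, Sequence, Tuple


def leave_one_group_out(
    groups: Sequence[str],
) -> Iterator[Tuple[str, List[int], List[int]]]:
    """Yield (held_out_group, train_indices, test_indices), one fold per group."""
    by_group: Dict[str, List[int]] = defaultdict(list)
    for idx, g in enumerate(groups):
        by_group[g].append(idx)
    if len(by_group) < 2:
        raise ValueError("need at least two distinct groups")
    for held_out in sorted(by_group):  # sorted for a deterministic fold order
        test_idx = by_group[held_out]
        train_idx = [i for i, g in enumerate(groups) if g != held_out]
        yield held_out, train_idx, test_idx


def cross_domain_report(
    groups: Sequence[str],
    train_and_score: Callable[[List[int], List[int]], float],
) -> Dict[str, float]:
    """Score a detector on each group after training only on the other groups.

    train_and_score is a hypothetical callback: it should fit a fresh model on
    the train indices and return a metric (e.g. ROC AUC) on the test indices.
    """
    return {
        held_out: train_and_score(train_idx, test_idx)
        for held_out, train_idx, test_idx in leave_one_group_out(groups)
    }
```

A large gap between a group's held-out score and the in-domain score suggests the detector is relying on domain-specific cues rather than on properties of machine-generated text.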

Key Challenges Addressed

- Evolving AI Models: Adapting to increasingly sophisticated text generation models

- Subtle Patterns: Detecting faint linguistic markers that distinguish AI-generated from human-written text

- Domain Adaptation: Ensuring performance across different text types and domains

- Adversarial Robustness: Maintaining accuracy against deliberate evasion attempts
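One well-known class of deliberate evasion is character-level obfuscation. Examples include inserting zero-width characters, swapping Latin letters for look-alike Cyrillic ones, or using full-width forms, all of which change tokenization without changing what a reader sees. The page does not describe the project's defenses, so the following is only a sketch of one standard preprocessing countermeasure, assuming detection runs on the normalized text. `normalize_for_detection` and the tiny `HOMOGLYPHS` map are hypothetical. A production system would draw on a fuller confusables table such as the one published with Unicode UTS #39.

```python
import unicodedata

# Hypothetical, deliberately tiny homoglyph map: Cyrillic letters that render
# like Latin ones. A real system would use a much larger confusables table.
HOMOGLYPHS = str.maketrans({
    "\u0430": "a",  # Cyrillic small a
    "\u0435": "e",  # Cyrillic small ie
    "\u043e": "o",  # Cyrillic small o
    "\u0440": "p",  # Cyrillic small er
    "\u0441": "c",  # Cyrillic small es
    "\u0445": "x",  # Cyrillic small ha
})


def normalize_for_detection(text: str) -> str:
    """Undo common character-level evasion before the text reaches a detector."""
    # NFKC folds compatibility characters (full-width letters, ligatures) to
    # their plain equivalents.
    text = unicodedata.normalize("NFKC", text)
    # Drop invisible format characters (category "Cf"), e.g. zero-width spaces
    # and joiners inserted to break tokenization.
    text = "".join(ch for ch in text if unicodedata.category(ch) != "Cf")
    # Map look-alike characters back to Latin letters.
    text = text.translate(HOMOGLYPHS)
    # Collapse whitespace padding into single spaces.
    return " ".join(text.split())
```

Normalization only addresses surface-level tricks. Paraphrasing attacks leave the characters intact, so robustness to them has to come from the detector itself, for example through adversarially augmented training data.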

Applications

Academic Integrity

Supporting educational institutions in maintaining academic honesty by detecting AI-assisted submissions.

Content Verification

Helping platforms and organizations verify the authenticity of textual content.

Research Tool

Providing researchers with reliable methods to study AI-generated content in various contexts.

Impact

This project contributes to the growing field of AI content detection. It addresses the societal challenge of distinguishing human-written from AI-generated content, which is essential for maintaining trust and authenticity in digital communication and content creation as language models grow more sophisticated.